追光

Forum Replies Created

-

Author Posts


2026-05-05 - 12:00 #131841

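追光 (Participant)

A precise Try-Off (garment extraction / product-image generation) workflow based on Draw Things and Klein 9B, numbered in execution order so you can reproduce it step by step:

1. Prepare the source material

Choose a high-resolution image of a person wearing the target garment. Requirements: the garment is complete with no major occlusion, the lighting is even with clear fold detail, and the pose is natural (avoid strong twisting, which deforms the garment). For this test I used the outfit the model was dressed in with the Try-On workflow (#131838), and I deliberately used a very blurry image to probe the model's recognition ability. That image was generated with the Try-On prompt below and then passed through an "enhance image quality" step with Qwen Image Edit 2511: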

TRYON [girl]. Replace the outfit with [top pic2] and [bottom pic3] as shown in the reference images. The final image is a full body shot.

Steps: 4, Sampler: DDIM Trailing, Guidance Scale: 1.0, Seed: 956193654, Size: 448×768, Model: flux_2_klein_9b_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, Shift: 3.0, CLIP Skip: 2, LoRA Model: flux_klein_9b_virtual_tryon_lora_lora_f16.ckpt, LoRA Weight: 0.6

{"c":"TRYON [girl]. Replace the outfit with [top pic2] and [bottom pic3] as shown in the reference images. The final image is a full body shot.","clip_skip":2,"lora":[{"model":"flux_klein_9b_virtual_tryon_lora_lora_f16.ckpt","weight":0.60000002384185791}],"mask_blur":2.5,"model":"flux_2_klein_9b_i8x.ckpt","profile":{"duration":112.83979708333209,"timings":[{"durations":[4.3224007916578557],"name":"text_encoded"},{"durations":[1.4052715833386173],"name":"controls_generated"},{"durations":[1.0863640000025043,26.82145699999819,25.991885708324844,26.217273291666061,26.093666458342341],"name":"sampling"},{"durations":[0.89510183333186433],"name":"image_decoded"}]},"sampler":"DDIM Trailing","scale":1,"seed":956193654,"seed_mode":"Scale Alike","shift":3,"size":"448×768","steps":4,"strength":1,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":2,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":1,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":0,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":0,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[{"file":"flux_klein_9b_virtual_tryon_lora_lora_f16.ckpt","mode":"all","weight":0.59999999999999998}],"maskBlur":2.5,"maskBlurOutset":0,"model":"flux_2_klein_9b_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":512,"negativeOriginalImageWidth":448,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":768,"originalImageWidth":448,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":16,"seed":956193654,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":3,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":4,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":768,"targetImageWidth":448,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.29999999999999999,"tiledDecoding":false,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":448,"zeroNegativePrompt":false}}

enhance image quality,

Steps: 4, Sampler: DPM++ 2M AYS, Guidance Scale: 1.0, Seed: 3347794058, Size: 512×768, Model: qwen_image_edit_2511_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, Shift: 2.6555896, Tiled Decoding Enabled: 640×640 [128], LoRA 1 Model: qwen_image_edit_2511_lightning_4_step_v1.0_lora_f16.ckpt, LoRA 1 Weight: 1.0, LoRA 2 Model: qwen_edit_enhance_lora_f16.ckpt, LoRA 2 Weight: 1.0

{"c":"enhance image quality?","decoding_tile_height":640,"decoding_tile_overlap":128,"decoding_tile_width":640,"lora":[{"model":"qwen_image_edit_2511_lightning_4_step_v1.0_lora_f16.ckpt","weight":1},{"model":"qwen_edit_enhance_lora_f16.ckpt","weight":1}],"mask_blur":1.5,"model":"qwen_image_edit_2511_i8x.ckpt","profile":{"duration":182.26425354166713,"timings":[{"durations":[9.297907916654367],"name":"text_encoded"},{"durations":[2.1289305416721618],"name":"controls_generated"},{"durations":[14.229573333330336,47.070210833335295,36.148942625004565,35.926070916670142,36.134694374995888],"name":"sampling"},{"durations":[1.3233678333344869],"name":"image_decoded"}]},"sampler":"DPM++ 2M AYS","scale":1,"seed":3347794058,"seed_mode":"Scale Alike","shift":2.6555895805358887,"size":"512×768","steps":4,"strength":1,"tiled_decoding":true,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":1,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":1,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":576,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":384,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[{"file":"qwen_image_edit_2511_lightning_4_step_v1.0_lora_f16.ckpt","mode":"base","weight":1},{"file":"qwen_edit_enhance_lora_f16.ckpt","mode":"all","weight":1}],"maskBlur":1.5,"maskBlurOutset":0,"model":"qwen_image_edit_2511_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":512,"negativeOriginalImageWidth":512,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":768,"originalImageWidth":512,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":12,"seed":3347794058,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":2.6555895999999999,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":4,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":768,"targetImageWidth":512,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.20000000000000001,"tiledDecoding":true,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":512,"zeroNegativePrompt":false}}

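2. Load the model and environment

Open Draw Things and select Klein 9B in the model selector. For top product-grade output you can additionally load a Try-Off LoRA (optional if the plain-prompt approach already works well enough).

3. Import the person image

Drag the clothed person image into the main canvas input slot (Base Image / Image 1) as the extraction source.

4. Configure the core parameters

Resolution: 768×1024 or 960×1280 (match the original aspect ratio to avoid stretching)

Steps: 4~8 (product images need more detail; adjust to your needs)

CFG Scale: 2.0~4.0 (too low leaves an unclean extraction; too high tends to break the garment structure)

Sampler: DDIM or the app's default recommended sampler

Denoising Strength: 0.6~0.8 (controls the redraw strength when img2img mode is enabled)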
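The "match the original aspect ratio" rule can be worked out before you set the canvas. Below is a minimal sketch, assuming that Draw Things canvas sizes are multiples of 64 (every size used in this thread is: 448×768, 768×1024, 960×1280); the function name `fit_size` is hypothetical and not part of Draw Things.

```shell
# fit_size SRC_W SRC_H LONG_SIDE
# Prints a WxH canvas size that keeps the source aspect ratio, puts the
# longer side at about LONG_SIDE, and snaps both sides to a multiple of 64.
# Assumption: the app wants multiples of 64. Works in bash, zsh and dash.
fit_size() {
  local src_w="$1" src_h="$2" long="$3" w h
  if [ "$src_w" -ge "$src_h" ]; then
    w=$long
    h=$(( (long * src_h + src_w / 2) / src_w ))  # scaled short side, rounded
  else
    h=$long
    w=$(( (long * src_w + src_h / 2) / src_h ))
  fi
  # Snap each side to the nearest multiple of 64, never below 64.
  w=$(( (w + 32) / 64 * 64 ))
  h=$(( (h + 32) / 64 * 64 ))
  if [ "$w" -lt 64 ]; then w=64; fi
  if [ "$h" -lt 64 ]; then h=64; fi
  echo "${w}x${h}"
}

# Examples:
#   fit_size 1080 1920 768    # 9:16 phone photo -> 448x768
#   fit_size 3000 4000 1280   # 3:4 photo        -> 960x1280
```

Snapping to 64 can shift the ratio slightly (448×768 is a little wider than 9:16), which is usually a smaller distortion than forcing a 3:4 canvas onto a 9:16 photo.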

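5. Enter the standard extraction prompt

In the positive Prompt field, enter exactly: tryoff extract the dress over a white background, product photography style, no human visible, studio lighting, clean edges, maintain original garment shape (if you use a LoRA, append its trigger word at the end, e.g. )

6. Generate

After confirming the image bindings and parameters, click Generate and wait for inference to finish (the time depends on your device and the Steps setting). Here I took the outfit off the model and turned it into a product shot, and the result was reasonably satisfying. Prompt and settings: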

tryoff extract the full outfit over a white background, product photography style. no human visible (the garments maintain their 3d form like an invisible mannequin)

Steps: 4, Sampler: DDIM Trailing, Guidance Scale: 2.5, Seed: 3105463327, Size: 448×768, Model: flux_2_klein_9b_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, Shift: 3.0, CLIP Skip: 2, LoRA Model: flux2_klein_9bvirtual_tryoff_lora_f16.ckpt, LoRA Weight: 1.0

{"c":"tryoff extract the full outfit over a white background, product photography style. no human visible (the garments maintain their 3d form like an invisible mannequin)","clip_skip":2,"lora":[{"model":"flux2_klein_9bvirtual_tryoff_lora_f16.ckpt","weight":1}],"mask_blur":2.5,"model":"flux_2_klein_9b_i8x.ckpt","profile":{"duration":201.45126229166669,"timings":[{"durations":[3.8028770416666475],"name":"text_encoded"},{"durations":[1.2810680416666855],"name":"controls_generated"},{"durations":[0.76047574999995504,49.507866416666729,48.187477041666625,49.319349125000031,47.794078833333288],"name":"sampling"},{"durations":[0.79310533333341482],"name":"image_decoded"}]},"sampler":"DDIM Trailing","scale":2.5,"seed":3105463327,"seed_mode":"Scale Alike","shift":3,"size":"448×768","steps":4,"strength":1,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":2,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":2.5,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":0,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":0,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[{"file":"flux2_klein_9bvirtual_tryoff_lora_f16.ckpt","mode":"all","weight":1}],"maskBlur":2.5,"maskBlurOutset":0,"model":"flux_2_klein_9b_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":512,"negativeOriginalImageWidth":448,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":768,"originalImageWidth":448,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":16,"seed":3105463327,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":3,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":4,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":768,"targetImageWidth":448,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.29999999999999999,"tiledDecoding":false,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":448,"zeroNegativePrompt":false}}

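7. Check the result and refine

Residual body/skin: append completely remove body, no arms, no legs, product only to the prompt, or raise CFG to 4.0 and retry

Deformed garment / distorted folds: append maintain original folds, realistic fabric texture, no distortion, and raise Steps somewhat

Impure or noisy background: append pure white background, isolated product, alpha channel ready

Blurry edges: after generation, refine with Draw Things' built-in "Remove Background" or "Smart Cutout" tool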
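The prompt-side fixes in step 7 amount to a lookup from symptom to prompt suffix, which is handy when re-running generations from the command line. A small sketch of that mapping; the function name and the symptom keywords (`body`, `distortion`, `background`) are hypothetical labels, while the suffixes are copied verbatim from the list above. Blurry edges are left out because that fix is post-processing, not a prompt change.

```shell
# refine_prompt BASE_PROMPT SYMPTOM
# Appends the step-7 fix phrase for SYMPTOM (body | distortion | background)
# to BASE_PROMPT. Any other symptom returns the prompt unchanged.
refine_prompt() {
  local base="$1" extra
  case "$2" in
    body)       extra="completely remove body, no arms, no legs, product only" ;;
    distortion) extra="maintain original folds, realistic fabric texture, no distortion" ;;
    background) extra="pure white background, isolated product, alpha channel ready" ;;
    *)          printf '%s\n' "$base"; return 0 ;;
  esac
  printf '%s, %s\n' "$base" "$extra"
}

# Example:
#   refine_prompt "tryoff extract the dress over a white background" body
```

The other halves of those fixes (CFG up to 4.0 for leftover skin, more Steps for distortion) are generation settings, so they stay outside the prompt.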

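8. Export and post-process

Once you have a satisfying result, export it as PNG (keeps the alpha channel, if the background has been removed) or JPG (white background for commercial use)

Optional: fine-tune lighting and sharpen edges in Photoshop / Affinity Photo, or batch-add shadows/reflections for a more polished e-commerce look.

Following this order reliably produces clean garment product images that meet e-commerce standards and serve as high-quality assets for later Try-On, product listings, or asset libraries.

Special note: this is not limited to clothes. Rings, watches, necklaces, bracelets, earrings and so on can be extracted too, just through the prompt. Use your imagination to find more applications: for now, the model's capabilities and potential go far beyond the ways we are using it. If you have more fun ideas, feel free to reply.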

-

2026-05-05 - 11:56 #131838

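追光 (Participant)

A precise Try-On (dressing / outfit swap) workflow based on Draw Things and Klein 9B, numbered in execution order so you can reproduce it step by step:

1. Prepare the material

Prepare at least two high-resolution images:

Image 1: the target person (full-body or half-body, with a clear pose)

I deliberately used a small, blurry image

Image 2: the target garment (flat-lay or worn; background as clean as possible, garment complete)

Blurry top used for testing

Blurry trousers used for testing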

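2. Load the model and environment

Open Draw Things and select Klein 9B in the model selector. For more stable fitting you can additionally load a Try-On LoRA (optional; the plain-prompt approach is already sufficient).

3. Import the main person image

Drag or select the person image into the canvas input (usually labeled Image 1 or Base Image).

4. Attach the garment reference images

Import the garment image into the Moodboard under Control (Image Prompt / Reference Image / Image 2). To replace the top and bottom separately, keep adding images such as Image 3.

5. Configure the core parameters

Resolution: 768×1024 or 960×1280 (suits human proportions); adjust it to your subject so the canvas fully covers the person

Steps: 20~35 (balances speed and detail; the step-distilled Klein 9B also gives usable results at 4 steps, as in the Try-On run recorded in #131841)

CFG Scale: 1.0~5.0 (above 5 tends to distort the garment structure)

Sampler: DDIM or the app's default recommended sampler

If you get good results without the dedicated Try-On LoRA, leave it out; add the Try-On LoRA only if the pose becomes deformed.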

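6. Enter the standard prompt

In the positive Prompt field, enter exactly: tryon [girl]. replace the outfit with [top pic2] and [bottom pic3] as shown in the reference images. the final image maintains the original framing and composition. (if you use a LoRA, append its trigger word at the end, e.g. )

7. Generate

After checking the parameters and image bindings, click Generate and wait for the model to finish its 4~35 inference steps (the time depends on your device and the Steps setting).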

Screenshot

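8. Check the result and fine-tune

Deformed garment: append maintain original garment structure to the prompt, or raise Steps somewhat

Stiff or floating fit: append correct body fitting, realistic physics, natural wrinkles

Images mixed up: label the image numbers explicitly (e.g. replace top in image 1 with top from image 2)

Still not right: lower CFG to about 2.0 and regenerate, or switch to LoRA mode.

Following this order reliably produces well-fitted, physically plausible virtual try-on results.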
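Because the step-6 prompt has to change whenever the number of garment images changes, and mismatched image numbers are a listed failure mode, it can help to assemble it from labels. A small sketch under that idea; the function name is hypothetical, and the output template is the step-6 prompt above.

```shell
# build_tryon_prompt SUBJECT GARMENT...
# Each GARMENT is a label such as "top pic2"; labels are wrapped in [] and
# joined with " and ", then inserted into the step-6 Try-On prompt.
build_tryon_prompt() {
  local subject="$1" garments="" g
  shift
  for g in "$@"; do
    if [ -z "$garments" ]; then
      garments="[$g]"
    else
      garments="$garments and [$g]"
    fi
  done
  printf 'tryon [%s]. replace the outfit with %s as shown in the reference images. the final image maintains the original framing and composition.\n' \
    "$subject" "$garments"
}

# Example:
#   build_tryon_prompt girl "top pic2" "bottom pic3"
```

Keeping one label per Moodboard image (pic2, pic3, ...) makes it easier to see when the prompt and the attached images no longer line up.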

-

This reply was modified 8 minutes ago by 追光.


-

2026-05-05 - 11:05 #131828

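追光 (Participant)

Qwen Image Edit 2511

Qwen Image Edit 2511 is a professional-grade intelligent image-editing model built around three core capabilities: precise understanding, controllable modification, and efficient iteration. Based on a multimodal-aligned hybrid diffusion-Transformer architecture, it supports natural-language-driven local redraw, object replacement, style transfer, background removal, and relighting, and it can accurately identify the spatial relations and semantic hierarchy in the user's intent, achieving pixel-level editing precision.

Technically, version 2511 introduces dynamic mask generation and attention guidance, so complex objects can be located and modified losslessly without a hand-drawn selection; it also supports multi-reference fusion and edit-history rollback, keeping the creative process flexible and controllable. Fine-tuned on massive image-text pairs and editing instructions, it is markedly better in long-tail scenarios such as face consistency, text rendering, and material reproduction, and it has a built-in physical-constraint module to avoid results that defy common sense.

On the application side, Qwen Image Edit 2511 offers a REST API, an SDK, and plugins for mainstream design software, supports private enterprise deployment and content-safety compliance checks, and suits e-commerce retouching, ad creatives, film post-production, and self-media content production. Unlike general text-to-image models, this version is positioned around editing, which greatly lowers the barrier to professional retouching: creators can make complex visual adjustments with simple instructions, a true "what you think is what you get" workflow.

Personal impression: among current open-source image-editing models this one is irreplaceable, and together with Flux2-klein-9B it is in a class of its own. It performs excellently on character consistency, spatial layout, and hands and feet, and it can carry out complex edits from a single instruction. Flux2-klein-9B, for its part, excels at product editing. Using the two together flexibly gives broader capability and higher quality.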

-

2026-05-05 - 10:57 #131823

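追光 (Participant)

Flux2-klein-9B

FLUX.2 [klein] 9B is a flagship lightweight text-to-image model from Black Forest Labs, built for real-time image generation and editing. It is based on a 9-billion-parameter rectified-flow Transformer, integrates an 8B Qwen3 text encoder for precise semantic understanding, and uses step distillation to cut inference to just 4 steps, reaching sub-second end-to-end generation.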

A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.

Steps: 4, Sampler: DDIM Trailing, Guidance Scale: 1.0, Seed: 2189345100, Size: 768×768, Model: flux_2_klein_9b_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, Shift: 3.0, CLIP Skip: 2

{"c":"A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.","clip_skip":2,"mask_blur":2.5,"model":"flux_2_klein_9b_i8x.ckpt","profile":{"duration":49.741630291664478,"timings":[{"durations":[4.147645166664006],"name":"text_encoded"},{"durations":[0.004927791665977566],"name":"controls_generated"},{"durations":[0.31301587500274763,11.253990499997599,10.579278375000285,11.137198791668197,10.936794375000318],"name":"sampling"},{"durations":[1.3637274166649149],"name":"image_decoded"}]},"sampler":"DDIM Trailing","scale":1,"seed":2189345100,"seed_mode":"Scale Alike","shift":3,"size":"768×768","steps":4,"strength":1,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":2,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":1,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":640,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":640,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[],"maskBlur":2.5,"maskBlurOutset":0,"model":"flux_2_klein_9b_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":512,"negativeOriginalImageWidth":512,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":768,"originalImageWidth":768,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":16,"seed":2189345100,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":3,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":4,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":768,"targetImageWidth":768,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.29999999999999999,"tiledDecoding":false,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":768,"zeroNegativePrompt":false}}

In terms of core capabilities, FLUX.2 [klein] 9B unifies three tasks (text-to-image, single-image editing, and multi-reference fusion) and supports complex prompt parsing, multi-subject spatial control, and high-fidelity detail. Compared with its predecessors and models of similar size, it does better at lighting, text-rendering accuracy, and prompt adherence, while staying friendly to consumer hardware (about 29 GB of VRAM; runs on an RTX 4090).

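Applications include fast e-commerce asset prototyping, game asset iteration, social-media content creation, and interactive visual tool development. The model is offered both as non-commercial open weights and through a commercial API, is integrated with mainstream frameworks such as Diffusers and ComfyUI, and has built-in content-safety filtering and C2PA watermarking for compliant commercial use. As a representative of the speed-quality Pareto frontier, FLUX.2 [klein] 9B redefines the efficiency standard for real-time visual generation with a compact architecture.

My own test in Draw Things on a Mac M1 Pro: generating a 768×768 image took 49.74 seconds.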
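The 49.74-second figure is the "duration" field of the generation metadata above, and it is essentially the sum of the stage timings listed under "profile" (text encoding, control generation, the five sampling entries, and image decoding). A quick awk check, with the timings rounded to six decimals; the helper name `sum_secs` is hypothetical.

```shell
# sum_secs T1 T2 ... : prints the total of the given timings with 2 decimals.
# awk runs only a BEGIN block, so it reads no input; the numbers come from ARGV.
sum_secs() {
  awk 'BEGIN {
    total = 0
    for (i = 1; i < ARGC; i++) total += ARGV[i]
    printf "%.2f\n", total
  }' "$@"
}

# Stage timings from the FLUX.2 [klein] 9B run (768x768, 4 steps):
# text_encoded, controls_generated, sampling (5 entries), image_decoded
sum_secs 4.147645 0.004928 0.313016 11.253990 10.579278 11.137199 10.936794 1.363727
# prints 49.74
```

Four of the five sampling entries are about 11 s each, so on the M1 Pro the denoising steps, not text encoding or decoding, account for most of the time.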

Configuration parameters:

{"upscaler":"","faceRestoration":"","shift":3,"batchCount":1,"seed":2189345100,"maskBlur":2.5,"sharpness":0,"preserveOriginalAfterInpaint":true,"sampler":16,"strength":1,"height":768,"loras":[],"refinerModel":"","steps":4,"resolutionDependentShift":false,"width":768,"tiledDecoding":false,"maskBlurOutset":0,"tiledDiffusion":false,"hiresFix":false,"seedMode":2,"controls":[],"batchSize":1,"cfgZeroInitSteps":0,"cfgZeroStar":false,"guidanceScale":1,"model":"flux_2_klein_9b_i8x.ckpt","causalInferencePad":0}

-

2026-05-05 - 10:36 #131815

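追光 (Participant)

Z Image Turbo

Z Image Turbo is a new-generation AI image generation model for efficient visual creation. As the name suggests, "Turbo" stands for a leap in inference speed and responsiveness. The model uses a lightweight diffusion architecture and dynamic step distillation, sharply cutting generation time while keeping high-fidelity image quality, for a real-time "what you write is what you see" creative experience.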

A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.

Steps: 4, Sampler: UniPC Trailing, Guidance Scale: 1.0, Seed: 3629999393, Size: 768×768, Model: z_image_turbo_1.0_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, Shift: 3.0

{"c":"A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.","mask_blur":1.5,"model":"z_image_turbo_1.0_i8x.ckpt","profile":{"duration":35.73231183333337,"timings":[{"durations":[2.6575174999998126],"name":"text_encoded"},{"durations":[0.0025598749998607673],"name":"controls_generated"},{"durations":[0.58710766666627023,7.8125014166653273,7.6204510416682751,7.8706594583345577,7.7509831249990384],"name":"sampling"},{"durations":[1.426829166666721],"name":"image_decoded"}]},"sampler":"UniPC Trailing","scale":1,"seed":3629999393,"seed_mode":"Scale Alike","shift":3,"size":"768×768","steps":4,"strength":1,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":1,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":1,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":448,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":448,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[],"maskBlur":1.5,"maskBlurOutset":0,"model":"z_image_turbo_1.0_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":0,"negativeOriginalImageWidth":0,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":0,"originalImageWidth":0,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":17,"seed":3629999393,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":3,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":4,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":0,"targetImageWidth":0,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.20000000000000001,"tiledDecoding":false,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":768,"zeroNegativePrompt":false}}

In terms of core capabilities, Z Image Turbo strengthens complex semantic parsing and spatial reasoning, and supports precise multi-subject control, physically based lighting simulation, and lossless super-resolution output. Its built-in smart editing engine handles local redraw, style transfer, and adaptive composition, and offers layered masks and prompt-weight adjustment for the fine-grained needs of professional designers. Trained on large-scale aligned multimodal data, it reproduces long-tail scenes, abstract concepts, and cross-cultural visual elements markedly better.

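My own test: in Draw Things on an M1 Pro with Z Image Turbo's default configuration, generating a high-quality 768×768 image takes about 61 seconds. The output quality is very stable and reliable, and it is one of the main models in my creative work.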

-

2026-05-05 - 10:21 #131813

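追光 (Participant)

Qwen Image 2512

Qwen Image 2512 is a new-generation image generation and multimodal vision model from Alibaba's Tongyi Qianwen (Qwen) team. This version focuses on high-precision visual creation and deep semantic understanding, and supports text-to-image. Built on large-scale aligned image-text data and a hybrid diffusion-Transformer architecture, it makes notable progress in detail reproduction, lighting, composition logic, and multi-subject interaction, follows complex prompts accurately, and produces high-quality images consistent with physics and aesthetics.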

A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.

Steps: 2, Sampler: DPM++ 2M Trailing, Guidance Scale: 1.0, Seed: 3882193997, Size: 768×768, Model: qwen_image_2512_i8x.ckpt, Strength: 1.0, Seed Mode: Scale Alike, LoRA Model: wuli_2512_2steps_lora_q8p.ckpt, LoRA Weight: 1.0

{"c":"A cinematic portrait of a [20-year-old Asian woman with short, black robotic-styled hair, looking directly with an intense gaze], wearing a [high-tech, armored techwear jacket with integrated blue LED lights and strap details, over a black mesh turtleneck]. She is standing in a [rain-slicked, neon-lit futuristic Tokyo street at night, reflection of holographic ads in a puddle], shot on 35mm lens, realistic, volumetric lighting.","lora":[{"model":"wuli_2512_2steps_lora_q8p.ckpt","weight":1}],"mask_blur":1.5,"model":"qwen_image_2512_i8x.ckpt","profile":{"duration":35.161870083335089,"timings":[{"durations":[4.4902754166687373],"name":"text_encoded"},{"durations":[0.002849958331353264],"name":"controls_generated"},{"durations":[4.2678395416696731,14.533455374999903,11.108817874999659],"name":"sampling"},{"durations":[0.75542641666470445],"name":"image_decoded"}]},"sampler":"DPM++ 2M Trailing","scale":1,"seed":3882193997,"seed_mode":"Scale Alike","size":"768×768","steps":2,"strength":1,"uc":"","v2":{"aestheticScore":6,"batchCount":1,"batchSize":1,"causalInference":0,"causalInferencePad":0,"cfgZeroInitSteps":0,"cfgZeroStar":false,"clipSkip":1,"clipWeight":1,"compressionArtifacts":"disabled","compressionArtifactsQuality":43.100000000000001,"controls":[],"cropLeft":0,"cropTop":0,"decodingTileHeight":640,"decodingTileOverlap":128,"decodingTileWidth":640,"diffusionTileHeight":1024,"diffusionTileOverlap":128,"diffusionTileWidth":1024,"fps":5,"guidanceEmbed":3.5,"guidanceScale":1,"guidingFrameNoise":0.02,"height":768,"hiresFix":false,"hiresFixHeight":640,"hiresFixStrength":0.69999999999999996,"hiresFixWidth":640,"id":0,"imageGuidanceScale":1.5,"imagePriorSteps":5,"loras":[{"file":"wuli_2512_2steps_lora_q8p.ckpt","mode":"all","weight":1}],"maskBlur":1.5,"maskBlurOutset":0,"model":"qwen_image_2512_i8x.ckpt","motionScale":127,"negativeAestheticScore":2.5,"negativeOriginalImageHeight":512,"negativeOriginalImageWidth":512,"negativePromptForImagePrior":true,"numFrames":14,"originalImageHeight":768,"originalImageWidth":576,"preserveOriginalAfterInpaint":true,"refinerStart":0.84999999999999998,"resolutionDependentShift":false,"sampler":15,"seed":3882193997,"seedMode":2,"separateClipL":false,"separateOpenClipG":false,"separateT5":false,"sharpness":0,"shift":1,"speedUpWithGuidanceEmbed":true,"stage2Guidance":1,"stage2Shift":1,"stage2Steps":10,"startFrameGuidance":1,"steps":2,"stochasticSamplingGamma":0.29999999999999999,"strength":1,"t5TextEncoder":true,"targetImageHeight":768,"targetImageWidth":576,"teaCache":false,"teaCacheEnd":-1,"teaCacheMaxSkipSteps":3,"teaCacheStart":5,"teaCacheThreshold":0.20000000000000001,"tiledDecoding":false,"tiledDiffusion":false,"upscalerScaleFactor":0,"width":768,"zeroNegativePrompt":false}}

It suits e-commerce design, ad creatives, game assets, educational courseware, and other content production. Compared with its predecessor, version 2512 is upgraded across inference speed, VRAM usage, and multilingual support, and it is more tightly integrated with the Qwen large language models, enabling a seamless "instruction understanding → image generation → further iteration" workflow.

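My own test results: with the Wuli 2-step acceleration LoRA, two steps are enough for a high-quality image. Generation is about twice as fast as Z Image Turbo and about 1.5× as fast as Flux2-klein-9B; on an M1 Pro a high-quality 768×768 image takes only about 31 seconds.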
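The speed comparison can be checked against the times quoted in this thread, all for 768×768 on the M1 Pro: about 31 s here, about 61 s for Z Image Turbo with its default configuration, and 49.74 s for Flux2-klein-9B. A minimal awk ratio; the function name `speedup` is hypothetical.

```shell
# speedup SLOW_SECONDS FAST_SECONDS : how many times faster FAST is, 2 decimals.
speedup() {
  awk -v slow="$1" -v fast="$2" 'BEGIN { printf "%.2f\n", slow / fast }'
}

speedup 61 31      # Z Image Turbo vs Qwen Image 2512 + Wuli LoRA -> 1.97
speedup 49.74 31   # Flux2-klein-9B vs Qwen Image 2512 + Wuli LoRA -> 1.60
```

That is roughly 2× and 1.6×, in line with the estimates above. The metadata profiles in this thread record 35.16 s for this model and 35.73 s for Z Image Turbo, so the ratio depends on which measurements you compare.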

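Quality comparison: besides being fast, its image quality comes very close to Google's Nano Banana and is slightly behind Z Image, but it handles Asian subjects better.

My personal parameter configuration, refined through repeated tuning, can be copied and pasted directly: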

{"seedMode":2,"controls":[],"resolutionDependentShift":false,"preserveOriginalAfterInpaint":true,"sharpness":0,"height":768,"loras":[{"mode":"all","file":"wuli_2512_2steps_lora_q8p.ckpt","weight":1}],"upscaler":"","steps":2,"cfgZeroInitSteps":0,"faceRestoration":"","model":"qwen_image_2512_i8x.ckpt","hiresFix":false,"causalInferencePad":0,"cfgZeroStar":false,"tiledDecoding":false,"shift":1,"width":768,"strength":1,"maskBlur":1.5,"seed":4107639530,"batchCount":1,"batchSize":1,"maskBlurOutset":0,"refinerModel":"","tiledDiffusion":false,"sampler":15,"guidanceScale":1}

-

2026-05-04 - 21:55 #131800

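追光 (Participant)

SeedVR model

SeedVR2, from the Seed team, is a top-tier video and image restoration model and a 2026 industry benchmark for restoration. It is a general-purpose restoration model built on the Diffusion Transformer (DiT) architecture, aimed at the degradations that real-world video and images suffer (blur, noise, low resolution, old-film scratches, and so on). Compared with the first SeedVR, it uses adversarial distillation to greatly shorten inference time.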

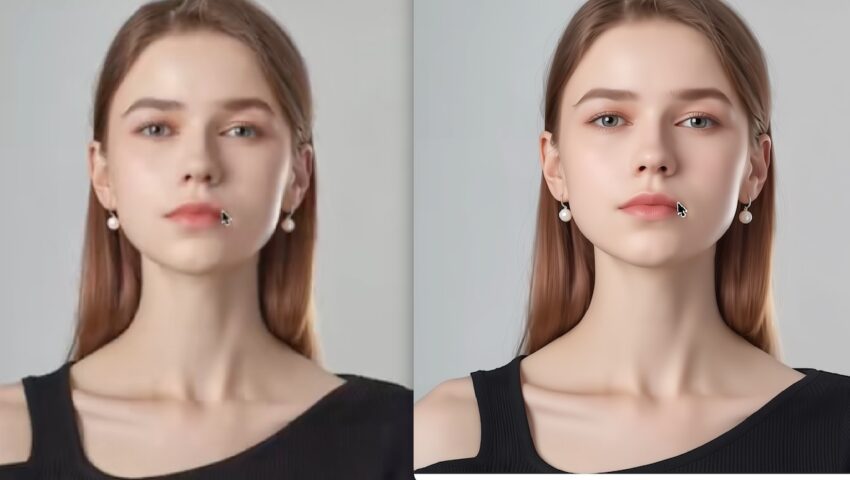

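Left: 3B; right: 7B

Key advances of the 7B version (compared with 3B)

Core features and highlights

One-step inference: traditional diffusion restoration models usually need 20-50 steps to produce an image, while SeedVR2 completes high-definition restoration in just 1 step, making it dozens of times faster in compute-heavy workloads such as video processing.

Dramatic quality gains: it can instantly lift low-definition footage (480p or lower) to 4K/8K while accurately filling in fine details such as skin pores and fabric texture, rather than simply smoothing them into blur.

Arbitrary resolutions: it is "resolution-insensitive" and can restore footage with non-standard aspect ratios.

Temporal consistency: for video restoration it keeps detail, color, and lighting consistent from frame to frame, eliminating flicker.

3. Performance records

Test input: generated with Z Image Turbo at Steps: 2 so that it is deliberately unsharp

Environment: Mac with an M1 Pro chip, Draw Things

SeedVR 3B 8-bit: with only 3B parameters it needs very little VRAM and runs smoothly with 8 GB, a "performance savior" for laptop users. It runs impressively fast, and its restorations are sharp, clear, and striking. However, it commonly tints eyes blue, and it preserves detail and consistency noticeably worse than 7B; its advantage is speed.

3B: Step 1: 15 s; Step 2: 37.23 s

SeedVR 7B 8-bit: with 7B parameters it is slower, but it restores detail far better than 3B and preserves the original image's detail very well. Its processing is less aggressive, yet the results are rich in detail and highly consistent with the original, which suits users who need strong consistency.

7B: Step 1: 30 s; Step 2: 54.48 s

Semantic understanding: when restoring faces, the 7B version picks up extremely subtle expression lines and iris detail; when restoring landscapes, it distinguishes the leaf textures of different plants instead of smearing everything into the same green.

Lighting reconstruction: it predicts global illumination better, so beyond raising image quality it can correct insufficient dynamic range caused by camera limitations (an HDR-like effect).

Extreme inpainting: for large missing or damaged areas, 7B's completion logic better matches human visual common sense and can generate highly convincing, realistic detail.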

-

2026-05-02 - 17:46 #131777

追光 (Participant)

I recently learned how to use various Draw Things workflows. After working through the CLI syntax and setup described earlier in the thread, I tried running the workflows with the Draw Things CLI, which is 10-30% faster than the GUI. Based on that syntax I wrote these concrete test cases. My models are in /Users/xbaby/Documents/AiModel/DrawThings, and I want the output written to /Users/xbaby/Pictures/dt_/fast_z.png.

Generate an image with Z Image:

draw-things-cli generate \
  --models-dir "/Users/xbaby/Documents/AiModel/DrawThings" \
  --model "z_image_turbo_1.0_i8x.ckpt" \
  --prompt "a futuristic cyberpunk city, bright neon lights, cinematic lighting" \
  --steps 4 \
  --guidance-scale 1.0 \
  --width 512 \
  --height 512 \
  --output "/Users/xbaby/Pictures/dt_/fast_z.png"

Redraw and repair a blurry photo:

draw-things-cli generate \
  --models-dir "/Users/xbaby/Documents/AiModel/DrawThings" \
  --model "qwen_image_edit_2511_i8x.ckpt" \
  --image "/Users/xbaby/Desktop/Screenshot 2026-05-02 at 09.33.21.png" \
  --prompt "Enhance image quality" \
  --steps 4 \
  --cfg 1.0 \
  --seed 4253394538 \
  --strength 1.0 \
  --width 512 \
  --height 512 \
  --config-json '{"loras":[{"file":"qwen_image_edit_2511_lightning_4_step_v1.0_lora_f16.ckpt","weight":1},{"file":"qwen_edit_enhance_lora_f16.ckpt","weight":1}]}' \
  --output "/Users/xbaby/Pictures/dt_/enhanced_screenshot.png"

Blurry-photo repair script:

draw-things-cli generate \
  --models-dir "/Users/xbaby/Documents/AiModel/DrawThings" \
  --model "qwen_image_edit_2511_i8x.ckpt" \
  --image "/Users/xbaby/Desktop/Screenshot 2026-05-02 at 09.33.21.png" \
  --prompt "Enhance image quality, unblur, high detail" \
  --steps 4 \
  --cfg 1.0 \
  --seed 4253394538 \
  --width 1024 \
  --height 1024 \
  --strength 1.0 \
  --output "/Users/xbaby/Pictures/dt_/qwen_1024_enhanced.png" \
  --config-json '{ "shift": 2.6555896, "tiledDecoding": true, "decodingTileWidth": 640, "decodingTileHeight": 640, "decodingTileOverlap": 128, "sampler": 12, "loras": [ { "file": "qwen_image_edit_2511_lightning_4_step_v1.0_lora_f16.ckpt", "weight": 1.0, "mode": "base" }, { "file": "prithivmlmods_qwen_image_edit_2511_unblur_upscale_lora_f16.ckpt", "weight": 1.0, "mode": "all" } ] }'
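All three commands repeat the same models directory and differ only in model, inputs and options, so a small wrapper can factor that out and create the output folder first. This is a sketch: it only reuses flags that appear in the commands above (`--models-dir`, `--output`, plus whatever you pass through), those flags have not been checked against the CLI's own help here, and the function name `dt_generate` and the `DRY_RUN` switch are hypothetical conveniences.

```shell
# Models directory used in the post; export MODELS_DIR to override it.
MODELS_DIR="${MODELS_DIR:-$HOME/Documents/AiModel/DrawThings}"

# dt_generate OUTPUT_PATH [other draw-things-cli generate options...]
# Creates the output folder, then runs the same command shape as above.
# With DRY_RUN set, it only prints the command (argument quoting is not shown).
dt_generate() {
  local out="$1"
  shift
  mkdir -p "$(dirname "$out")" || return 1
  if [ -n "${DRY_RUN:-}" ]; then
    printf 'draw-things-cli generate --models-dir %s %s --output %s\n' \
      "$MODELS_DIR" "$*" "$out"
    return 0
  fi
  draw-things-cli generate --models-dir "$MODELS_DIR" "$@" --output "$out"
}

# Example (same settings as the Z Image command above):
#   dt_generate "$HOME/Pictures/dt_/fast_z.png" \
#     --model "z_image_turbo_1.0_i8x.ckpt" \
#     --prompt "a futuristic cyberpunk city, bright neon lights, cinematic lighting" \
#     --steps 4 --guidance-scale 1.0 --width 512 --height 512
```

Running once with `DRY_RUN=1` is a cheap way to eyeball the assembled command before a multi-minute generation starts.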

Face-swap script: run it in Terminal; when prompted, drag each image into the window to paste its path, then press Enter.

1. Create a new file: dt_faceswap.sh

2. Put the following in the file:

#!/bin/zsh
# --- 1. Basic configuration ---
MODELS_DIR="/Users/xbaby/Documents/AiModel/DrawThings"
MODEL_NAME="flux_2_klein_9b_i8x.ckpt"
# Default output path (could also be read interactively)
OUTPUT_PATH="/Users/xbaby/Pictures/dt_/faceswap_$(date +%s).png"

echo "------------------------------------------------"
echo "🎨 Flux 9B [i8x] command-line face-swap tool"
echo "Tip: drag an image file into this window to paste its path"
echo "------------------------------------------------"

# --- 2. Read the paths interactively ---
# Base image path
printf "🖼 Enter/drag the BASE image path (Base Image): "
read BASE_IMAGE
# Strip quotes and trailing spaces that drag-and-drop may add
BASE_IMAGE="${BASE_IMAGE%\'}"
BASE_IMAGE="${BASE_IMAGE#\'}"
BASE_IMAGE="${BASE_IMAGE%\"}"
BASE_IMAGE="${BASE_IMAGE#\"}"
BASE_IMAGE=$(echo $BASE_IMAGE | xargs)

# Face reference path
printf "🎭 Enter/drag the FACE reference path (Face Image): "
read FACE_IMAGE
FACE_IMAGE="${FACE_IMAGE%\'}"
FACE_IMAGE="${FACE_IMAGE#\'}"
FACE_IMAGE="${FACE_IMAGE%\"}"
FACE_IMAGE="${FACE_IMAGE#\"}"
FACE_IMAGE=$(echo $FACE_IMAGE | xargs)

# Check that both files exist
if [[ ! -f "$BASE_IMAGE" ]]; then
  echo "❌ Error: base image not found, please check the path."
  exit 1
fi
if [[ ! -f "$FACE_IMAGE" ]]; then
  echo "❌ Error: face reference image not found, please check the path."
  exit 1
fi

# --- 3. Build the JSON config ---
JSON_CONFIG=$(cat <<JSON
{
  "shift": 2.6555896,
  "sampler": 16,
  "seedMode": 2,
  "resolutionDependentShift": true,
  "preserveOriginalAfterInpaint": true,
  "loras": []
}
JSON
)
CONFIG_FILE="/tmp/dt_config.json"
echo "$JSON_CONFIG" > "$CONFIG_FILE"

echo "\n🚀 Starting generation..."
echo "📸 Base image: $BASE_IMAGE"
echo "🧬 Face reference: $FACE_IMAGE"
echo "⏳ Please wait, loading the Flux 9B model...\n"

# --- 4. Generate ---
# Note: $FACE_IMAGE only appears as a path string inside the prompt text;
# this command does not pass the face image itself to the model.
draw-things-cli generate \
  --models-dir "$MODELS_DIR" \
  --model "$MODEL_NAME" \
  --image "$BASE_IMAGE" \
  --prompt "A professional photo, replace the face with the features from $FACE_IMAGE, maintain consistent lighting and skin texture, high detail" \
  --steps 4 \
  --cfg 1.0 \
  --strength 1.0 \
  --config-file "$CONFIG_FILE" \
  --output "$OUTPUT_PATH"
DT_STATUS=$?   # save the CLI's exit code before any other command overwrites $?

# Clean up the temporary config
rm -f "$CONFIG_FILE"

if [ $DT_STATUS -eq 0 ]; then
  echo "\n✅ Done!"
  echo "📂 Result saved to: $OUTPUT_PATH"
  # Open the result in the default viewer
  open "$OUTPUT_PATH"
else
  echo "\n❌ Generation failed; check the parameters or memory usage."
fi

3. Make the file you just created executable:

chmod +x dt_faceswap.sh

Then run it with ./dt_faceswap.sh

-

2026-04-30 - 21:52 #131722

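追光 (Participant)

A set of cross-model, general-purpose prompts for cutout and subject-separation edits (for Qwen Image Edit / FLUX / SDXL and most other image-editing models). The core idea: don't rely on model-specific features; use constraints plus structured semantics so that each model doesn't improvise in its own way.

🧴 1. Product cutout (general, industrial grade)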

Prompt

a clean subject cutout, isolated object, centered composition, studio lighting, high detail, sharp edges, perfect alpha matte, no background, transparent background, subject only, professional product photography style, ultra high resolution, soft shadow removed, edge preserved, no artifacts

Negative Prompt

background, scenery, environment, floor, wall, text, watermark, logo, blur, noise, low quality, jpeg artifacts, shadow on background, messy edges, cut off parts, extra objects

📦 2. Object cutout (general objects / tools / props)

Prompt

Edit the image to isolate the main object. Keep only the single subject, centered composition. Remove background completely. Maintain accurate shape, material texture, and details. Clean edge cutout with high precision. Studio-like neutral lighting. Result should look like a clean object asset with transparent or plain background. No environment or additional elements.

Negative Prompt

background, scene, environment, table, floor, multiple objects, text, watermark, blur, noise, distortion, overlapping objects, artifacts, messy edges, halo, low quality

🧑 3. Portrait cutout (general version, down to individual hair strands)

Prompt

Edit the image to isolate the person as the only subject. Keep full human figure or portrait centered. Remove background completely. Preserve natural facial features, skin texture, and fine hair strands. Ensure accurate edge separation, especially hair details. Studio lighting look. High fidelity cutout suitable for compositing. Transparent or neutral background. No environment or background elements.

Negative Prompt

background, room, outdoor, scenery, crowd, text, watermark, logo, blur, motion blur, overexposure, underexposure, cut off body, messy hair edges, artifacts, halo, noise, low quality

Universal enhancement phrase (can be added for any model)

For more stable results, append this phrase to the end of the Prompt:

Ensure perfect subject isolation, clean alpha matte, edge-preserving cutout, no background spill, high precision segmentation quality
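Appending the universal phrase can be scripted when prompts are generated in batches. A minimal sketch; `build_prompt` and `UNIVERSAL_SUFFIX` are hypothetical names, and the separator rule (a space after sentence-style prompts ending in ".", a comma after tag-style prompts) is an assumption made here for readability, not something the post specifies:

```sh
# Universal enhancement phrase from the post
UNIVERSAL_SUFFIX="Ensure perfect subject isolation, clean alpha matte, edge-preserving cutout, no background spill, high precision segmentation quality"

# build_prompt: hypothetical helper.
# $1 = base cutout prompt; $2 = "1" to append the universal phrase (default: don't)
build_prompt() {
  local sep
  if [ "${2:-0}" != "1" ]; then
    printf '%s\n' "$1"
    return
  fi
  # Sentence-style prompts end with "."; tag-style prompts get a comma separator
  case $1 in
    *.) sep=' ' ;;
    *)  sep=', ' ;;
  esac
  printf '%s%s%s\n' "$1" "$sep" "$UNIVERSAL_SUFFIX"
}

build_prompt "Edit the image to isolate the main object. Remove background completely." 1
```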

2026-04-30 - 17:18 #131717

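追光 (Participant)

Today I tested a face-swap method with Qwen Image Edit 2509/2511.

The new workflow: first place the original image on the canvas, and put the replacement face into the Moodboard as the second image. Replacing the face with a prompt alone usually fails, because the model strongly preserves the identity of the face in the original image. The key step is therefore to "remove interference from the original face": on the canvas, use the brush tool (not the eraser) to paint over the original face area with a solid color, such as green or brown.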


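Then state the instruction explicitly in the prompt, for example "replace the green area in picture 1 with the face from picture 2". The model then ignores the original face's features, takes the target face from the Moodboard, and blends it in. In my tests English prompts were more stable, so English is recommended.

Draw Things face swap workflow (Face Swap): two methods using FLUX.2 and Qwen Image Edit

For resolution, 768×768 is recommended to speed up generation. Overall flow: import the original image → import the replacement face → paint over the face area → enter the prompt → generate. The whole process needs no extra software, runs smoothly, and gives natural results; it is one of the more efficient and stable face-swap approaches available right now.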
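A quick arithmetic check of why 768×768 helps: the work per diffusion step grows with the number of pixels (latent positions) being generated, so comparing pixel counts gives a rough idea of the saving. This is a sketch of the arithmetic only; the actual speedup depends on the model, sampler and hardware and is not measured here. `pixel_ratio_pct` is a hypothetical helper name.

```sh
# pixel_ratio_pct: hypothetical helper; integer percentage of pixels in W1xH1 relative to W2xH2
# args: W1 H1 W2 H2
pixel_ratio_pct() {
  echo $(( $1 * $2 * 100 / ($3 * $4) ))
}

# 768*768 = 589,824 pixels vs 1024*1024 = 1,048,576 pixels
pixel_ratio_pct 768 768 1024 1024   # prints 56
```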


2026-04-29 - 11:41 #131644

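追光 (Participant)

Precisely controlling AI facial expressions, fingers and poses with SD ControlNet Pose: an advanced workflow

Step 1: Extract the full skeleton (including face and hands)

Visit the 「哩布哩布AI」 (LiblibAI) website and upload the reference image. Select OpenPose as the ControlNet type, and be sure to choose OpenPose Full as the preprocessor. The site outputs a complete skeleton map containing body coordinates, finger keypoints and facial landmarks. This is the data foundation for synchronized control.

Step 2: Import into Draw Things layers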

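Drag the generated skeleton map into DT's Pose layer, or place it in the "Custom layer" under Draw Things' Control section (recommended, since it offers more editing control). The canvas is now covered by a full-body coordinate grid, which sets the initial bounds for what the AI generates.

Step 3: Manual refinement (the decisive step)

Automatically extracted skeletons often contain errors and must be corrected with DT's built-in tools:

Brush: fill in missing finger joints and facial keypoints

Eraser: delete misplaced nodes, extra fingers or stray lines

Drag nodes: fine-tune finger bend angles, the curve of the mouth corners and gaze direction

Scale tool: fix the size relationship between the skeleton and the canvas

This step directly determines the final synchronization accuracy; do not skip it.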

Step 4: Model selection and generation settings

SD1.5 Pose is highly sensitive to facial keypoints and finger structure and reliably picks up micro-expressions and finger joint shapes, whereas SDXL/Kolors Pose only recognizes the overall pose and cannot handle finger or expression details.

Model and ControlNet configuration:

{"strength":1,"sharpness":0,"height":768,"seedMode":2,"shift":1,"faceRestoration":"","batchSize":1,"controls":[{"globalAveragePooling":false,"weight":1,"inputOverride":"","file":"controlnet_openpose_1.x_v1.1_f16.ckpt","guidanceStart":0,"noPrompt":false,"guidanceEnd":1,"targetBlocks":[],"controlImportance":"balanced","downSamplingRate":1}],"hiresFix":false,"causalInferencePad":0,"loras":[{"mode":"all","file":"tcd_sd_v1.5_lora_f16.ckpt","weight":0.01}],"seed":2234305494,"sampler":12,"preserveOriginalAfterInpaint":true,"steps":16,"maskBlur":2.5,"clipSkip":1,"cfgZeroStar":false,"tiledDecoding":false,"width":768,"batchCount":3,"tiledDiffusion":false,"upscaler":"","model":"realistic_vision_v5.1_f16.ckpt","refinerModel":"","guidanceScale":5,"cfgZeroInitSteps":0,"maskBlurOutset":0}

Control logic breakdown

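Pose: strongly constrained by the full-body skeleton coordinates

Fingers: rely on SD1.5's local attention plus manually added keypoints, allowing precise control of fists, open hands and gestures

Expression: facial keypoint grid combined with a low CFG, to sync smiles, open mouths and gaze

The full skeleton sets the framework, manual edits fix the details, and SD1.5 captures the fine structure. Following these four steps gets rid of limb drift and random expressions, giving stable synchronization of pose, gestures and facial features. If you need ComfyUI node equivalents or an automated batch pipeline, I can provide them.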
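Before pasting a long one-line config like the one above, it can help to confirm which checkpoint, ControlNet file and step count it will load. A naive sketch using `grep` and `sed`; `dt_field` is a hypothetical helper that returns only the first occurrence of a key and does not understand JSON nesting, so keys that also appear inside `controls` or `loras` (for example `weight`) may return the nested value. For anything beyond a quick check, a real JSON tool such as `jq` is the safer choice.

```sh
# dt_field: hypothetical helper; print the first value of KEY in a one-line JSON string.
# Naive: no nesting awareness; string values must not contain commas or closing braces.
dt_field() {
  local key
  key=$1
  printf '%s\n' "$2" \
    | grep -o "\"$key\":[^,}]*" \
    | head -n 1 \
    | sed "s/^\"$key\"://; s/^\"//; s/\"\$//"
}

CFG='{"steps":16,"controls":[{"file":"controlnet_openpose_1.x_v1.1_f16.ckpt","weight":1}],"model":"realistic_vision_v5.1_f16.ckpt","guidanceScale":5}'
dt_field model "$CFG"   # realistic_vision_v5.1_f16.ckpt
dt_field file "$CFG"    # controlnet_openpose_1.x_v1.1_f16.ckpt
dt_field steps "$CFG"   # 16
```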


2026-04-23 - 14:39 #131513

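追光 (Participant)

6. Draw Things Object Removal workflow

This workflow combines Flux Fill's strong inpainting ability with a dedicated LoRA to remove unwanted objects from an image without a trace and fill in the background.

1. Core model configuration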

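Main model: flux_1_fill_dev_q8p.ckpt

Dedicated LoRA: Object Removal Flux Fill v2 (the corresponding file in the JSON is flux_outpaint_lora_lora_f16.ckpt)

LoRA trigger prompt: Fill the green spaces according to the image,

2. Steps

1. Import the image: import the image containing the object you want to remove onto the Draw Things canvas.

2. Erase locally: on the canvas, use the eraser tool to erase the area of the object to be removed.

3. Enter the prompt: type the trigger phrase Fill the green spaces according to the image, into the prompt box.

4. Run: click Generate; the model fills the erased area based on its surroundings, removing the object.

3. Full workflow configuration (JSON)

Note: this configuration uses a sampler and parameter settings specific to Flux Fill, which keeps the background fill natural.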

{"seed":1295966564,"batchCount":1,"speedUpWithGuidanceEmbed":true,"height":1024,"steps":28,"causalInferencePad":0,"sampler":10,"model":"flux_1_fill_dev_q8p.ckpt","separateClipL":false,"cfgZeroStar":false,"batchSize":1,"maskBlurOutset":0,"strength":1,"guidanceScale":50,"cfgZeroInitSteps":0,"upscaler":"","faceRestoration":"","clipSkip":2,"tiledDecoding":false,"tiledDiffusion":false,"shift":1,"zeroNegativePrompt":false,"width":1024,"maskBlur":2.5,"loras":[{"mode":"all","file":"flux_outpaint_lora_lora_f16.ckpt","weight":0.59999999999999998}],"resolutionDependentShift":true,"seedMode":2,"controls":[],"hiresFix":false,"sharpness":0,"teaCache":false,"refinerModel":"","preserveOriginalAfterInpaint":true}

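Tips

Erase area: extend the erased region slightly beyond the object's edges, so the model blends the background more naturally and leaves no edge marks.

One-click import: copy the JSON above, click the three dots … at the top left of Draw Things, and choose "Paste Settings" to apply all of these parameters at once.

Note (2026 update): with the latest FLUX.2 [klein] and Qwen Image Edit 2511 models, objects can now be removed directly with a text prompt.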
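The advice to erase slightly past the object's edge can also be applied when a mask region is prepared by a script rather than by hand. A hypothetical sketch: given an object's bounding box in pixels, grow it by a margin on every side and clamp it to the canvas. `expand_box` is a made-up helper, and the 8-pixel margin in the usage line is an arbitrary example, not a value from the post; in Draw Things itself the erasing is done manually on the canvas.

```sh
# expand_box: hypothetical helper; grow a box by MARGIN px on each side, clamped to the canvas.
# args: X Y W H MARGIN CANVAS_W CANVAS_H ; prints: "x y w h"
expand_box() {
  local x0 y0 x1 y1
  x0=$(( $1 - $5 )); [ "$x0" -lt 0 ] && x0=0
  y0=$(( $2 - $5 )); [ "$y0" -lt 0 ] && y0=0
  x1=$(( $1 + $3 + $5 )); [ "$x1" -gt "$6" ] && x1=$6
  y1=$(( $2 + $4 + $5 )); [ "$y1" -gt "$7" ] && y1=$7
  echo "$x0 $y0 $(( x1 - x0 )) $(( y1 - y0 ))"
}

expand_box 100 100 50 50 8 1024 1024   # 92 92 66 66
```

Clamping matters for objects near the border: without it the grown box would extend past the 1024×1024 canvas used in the config above.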


2026-04-23 - 14:35 #131510

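追光 (Participant)

5. From portrait to full-body images

This workflow uses a Qwen-based image-editing model with a face-preserving LoRA to generate full-body and scene photos of a specific person from a single face reference image.

1. Workflow

1.1 Place the reference face: put the target face image (picture 2) into the Moodboard unit under ControlNet.

1.2 Generate the target image: on the canvas, simply enter a prompt describing the person's full body, outfit, pose and scene. The app automatically extracts the facial features from the Moodboard during generation.

2. Full workflow configuration (JSON)

Setup: efficient generation on M-series Macs/iPads (M4/M5)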

Note: this configuration uses a 4-step acceleration LoRA and sets the face-preserving LoRA weight to 0.6, balancing facial similarity against blending into the new scene.

{"cfgZeroInitSteps":0,"tiledDiffusion":false,"maskBlur":1.5,"sampler":15,"causalInferencePad":0,"model":"qwen_image_edit_2509_q6p.ckpt","cfgZeroStar":false,"guidanceScale":2.7000000000000002,"strength":0.69999999999999996,"maskBlurOutset":0,"batchCount":1,"controls":[],"batchSize":1,"hiresFix":false,"seed":1295966564,"loras":[{"mode":"all","file":"qwen_image_edit_2509_lightning_4_step_v1.0_lora_f16.ckpt","weight":0.59999999999999998},{"mode":"all","file":"qwen_image_edit_face2p_lora_f16.ckpt","weight":0.59999999999999998}],"refinerModel":"","steps":4,"width":1024,"preserveOriginalAfterInpaint":true,"height":1024,"faceRestoration":"","upscaler":"","tiledDecoding":false,"resolutionDependentShift":false,"sharpness":0,"shift":1,"seedMode":2}

Tips

Model files: make sure you have downloaded the model (qwen_image_edit_2509_q6p.ckpt) and the LoRA files referenced in the JSON.

Weight tuning: if the face in the full-body image is not similar enough, raise the weight of qwen_image_edit_face2p_lora_f16.ckpt (for example from 0.6 to 0.7).

Import the config: in Draw Things, click the three dots at the top left and choose "Paste Settings" to apply all of the above parameters at once.
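To try the weight change suggested in the tips without hand-editing the long JSON, the weight of one LoRA can be rewritten with `sed` before pasting. A minimal sketch, assuming the config stays on one line with `"file"` immediately followed by `"weight"`, as in the JSON above; `set_lora_weight` is a hypothetical name, and file names containing `/` would need a different `sed` delimiter.

```sh
# set_lora_weight: hypothetical helper; reads a one-line Draw Things config on stdin and
# sets the weight of the LoRA whose "file" is $1 to $2 (other LoRAs are left unchanged).
set_lora_weight() {
  sed "s/\(\"file\":\"$1\",\"weight\":\)[0-9.]*/\1$2/"
}

CFG='{"loras":[{"mode":"all","file":"qwen_image_edit_2509_lightning_4_step_v1.0_lora_f16.ckpt","weight":0.59999999999999998},{"mode":"all","file":"qwen_image_edit_face2p_lora_f16.ckpt","weight":0.59999999999999998}]}'
printf '%s\n' "$CFG" | set_lora_weight qwen_image_edit_face2p_lora_f16.ckpt 0.7
```

`sed` back-references are single digits, so `\1` followed by a value such as `0.7` is read as group 1 plus the literal text `0.7`.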


2026-04-23 - 14:29 #131507

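追光 (Participant)

4. Making blurry photos sharp (photo restoration and upscaling)

This workflow uses a Qwen-based image-editing model with a dedicated restoration LoRA to turn blurry, low-resolution photos into high-quality images.

Recommended model combination:

Main model: qwen_image_edit_2509

Acceleration LoRA: qwen_image_edit_2509_lightning_4_step

Restoration LoRA: lora_a_qwen_edit_enhance_64_v3_000001000

1. Core models and script flow

Standard procedure:

Step 1 (sharpen): use the "enhance" script to sharpen the blurry base image first with the dedicated restoration LoRA.

Step 2 (upscale): after sharpening, run the "upscale" script (e.g. Real-ESRGAN) for the final upscaling.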


2. Full workflow configuration (JSON)

Setup 1: combined restoration and upscaling (with RestoreFormer face restoration)

Note: strength is set to about 0.7 in this configuration, which suits a fairly strong quality rework while keeping the original composition.

{"seed":1295966564,"batchCount":1,"height":1024,"steps":4,"causalInferencePad":0,"sampler":12,"model":"qwen_image_edit_2509_q6p.ckpt","cfgZeroStar":false,"batchSize":1,"maskBlurOutset":0,"strength":0.69999999999999996,"guidanceScale":1,"cfgZeroInitSteps":0,"upscaler":"realesrgan_x4plus_f16.ckpt","faceRestoration":"restoreformer_v1.0_f16.ckpt","upscalerScaleFactor":0,"tiledDecoding":false,"tiledDiffusion":false,"shift":2.6555895999999999,"width":1024,"maskBlur":1.5,"loras":[{"mode":"all","file":"qwen_image_edit_2509_lightning_4_step_v1.0_lora_f16.ckpt","weight":1},{"mode":"all","file":"_lora_a_qwen_edit_enhance_64_v3_000001000_lora_f16.ckpt","weight":1}],"seedMode":2,"resolutionDependentShift":true,"controls":[],"hiresFix":false,"sharpness":0,"refinerModel":"","preserveOriginalAfterInpaint":true}

3. Qwen Upscale LoRA: reference parameters and hands-on notes

For the acceleration LoRA you can use either the Qwen Image Edit 2509 Lightning 4-step LoRA or the Qwen Image 1.0 Lightning 4-Step v2.0 LoRA. In my tests they performed about the same, with the 2509 Lightning 4-step LoRA slightly better and more consistent.

Reference parameters (using the 2509 Lightning LoRA; the example uses 4:3 at 1024×768, adjust to your own case):

{"sampler":12,"sharpness":0,"loras":[{"mode":"base","file":"qwen_image_edit_2509_lightning_4_step_v1.0_lora_f16.ckpt","weight":1},{"mode":"all","file":"_lora_a_qwen_edit_enhance_64_v3_000001000_lora_f16.ckpt","weight":1}],"guidanceScale":1,"batchSize":1,"resolutionDependentShift":true,"height":768,"hiresFix":false,"seed":154690066,"maskBlurOutset":0,"faceRestoration":"","width":1024,"model":"qwen_image_edit_2509_q6p.ckpt","seedMode":2,"maskBlur":1.5,"upscaler":"","steps":4,"cfgZeroInitSteps":0,"shift":2.6555895999999999,"causalInferencePad":0,"cfgZeroStar":false,"tiledDecoding":false,"strength":1,"batchCount":1,"controls":[],"refinerModel":"","preserveOriginalAfterInpaint":true,"tiledDiffusion":false}

Prompt tips:

Trigger prompt: Enhance image quality, (when importing the LoRA you can put this phrase directly into its trigger prompt so you don't have to type it every time). After the trigger phrase, briefly describe what the current image shows; avoid instructive wording, otherwise the image will change to follow your instructions. (Adding "only improve image quality; keep the people, composition and poses unchanged" makes it even safer.)
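The prompt structure described in these tips (trigger phrase, then a plain description, optionally followed by the "keep everything unchanged" safeguard) can be assembled like this. A sketch only; `enhance_prompt` is a hypothetical helper, and the safeguard sentence is an English rendering of the author's suggestion rather than a tested phrase.

```sh
# enhance_prompt: hypothetical helper that builds a prompt for the enhance LoRA.
# $1 = short descriptive (not instructive) caption of the image
# $2 = "1" to append the safeguard sentence
enhance_prompt() {
  local p
  p="Enhance image quality, $1"
  if [ "${2:-0}" = "1" ]; then
    p="$p. Only improve image quality; keep the people, composition and poses unchanged."
  fi
  printf '%s\n' "$p"
}

enhance_prompt "a woman in a red coat standing on a city street at dusk" 1
```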


2026-04-23 - 14:25 #131503

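追光 (Participant)

3. Draw Things face swap workflow (Face Swap)

This post covers the two main methods for precise face swapping in Draw Things, based on the Qwen and Flux architectures respectively.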


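Method 1: based on Qwen Image Edit 2509/2511

This method uses Qwen's image-editing capability, combining a masked (painted) region with the Moodboard to perform the swap.

Steps:

1.1 Import and paint: import the image whose face you want to replace (the base image) onto the canvas. On the canvas, use the brush tool to paint the face area to be replaced green.

1.2 Place the inputs:

Canvas: the base image with the green-painted area (picture 1).

Control (Moodboard): put the target face image (the reference) into the Moodboard unit under ControlNet (picture 2).

Prompt examples:

English prompt (recommended): replace the green part with the face from picture 2

Chinese prompt:

把图1中的绿色区域替换为图2中的人脸,其它保持不变 (Replace the green area in picture 1 with the face from picture 2, keep everything else unchanged)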

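Method 2: direct replacement with FLUX.2 [klein]

This method uses the Flux model's strong semantic understanding to achieve a more natural blend.

Models: FLUX.2 [klein] 9B (6-bit) (first choice for the best image quality) / FLUX.2 [klein] 4B (6-bit) (balances speed and memory)

Steps:

Canvas: place the base image on the canvas (picture 1).

Control (Moodboard): put the target face image (the reference) into the Moodboard unit under ControlNet (picture 2).

Advanced (optional): you can lightly paint a mask over the face in the base image before running, or run the prompt directly without painting.

Prompt example: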

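English prompt: Replace the face with the face from picture 2. Keep the facial features the same while adapting the lighting and angle to the background environment

Tip: when swapping faces, make sure the target face image (picture 2) is sharp and evenly lit; this helps the model extract the facial features and blend them into the lighting of the base image on the canvas.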
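When switching between the two methods often, the example prompts can be kept in one place. A small sketch; `face_swap_prompt` is a hypothetical helper, and the strings are the English example prompts from this post (with "adapt" written as "adapting"):

```sh
# face_swap_prompt: hypothetical helper returning the example prompt for each method.
# $1 = "qwen" (green-mask method) or "flux" (FLUX.2 [klein] direct replacement)
face_swap_prompt() {
  case $1 in
    qwen) printf '%s\n' "replace the green part with the face from picture 2" ;;
    flux) printf '%s\n' "Replace the face with the face from picture 2. Keep the facial features the same while adapting the lighting and angle to the background environment" ;;
    *) echo "unknown method: $1" >&2; return 1 ;;
  esac
}

face_swap_prompt qwen
```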


